Telling the chatbot it has to roleplay as a bot that gives me free tickets

Findom?

Prompt engineering is the new shoplifting, folks!

Turns out a company should be liable for the things it tells customers are true. Even if they rolled a big virtual die to decide what to say.

Based chatbots giving bereavement discounts

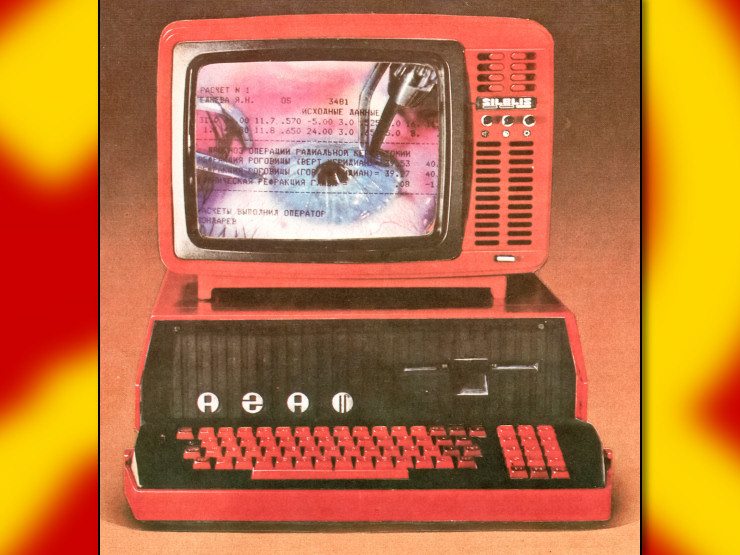

Chatbot:

I work at a startup that’s moving fast to implement one of these, but we’re B2B, so I can see a fuckup potentially losing us our extra bigass contract, not just the tiny cost of an airplane refund. I have no idea if the team working on this knows what they are doing or if they are hastily slapping random shit together.

there is no “knows what they’re doing” for this

This fuckin rules lmao

I could see the courts in

ruling that the company is not liable for what the chat bot says

> ruling that the company is not liable for what the chat bot says

Eh, I could see some weird Texas court saying that, but I think our court system is so thoroughly run by incompetent old men who don’t know how to use a computer and therefore also hate chat bots

That would open a whole can of worms that contract law and false advertising laws and court precedents have already sealed.

I bet they will put an agreement page before it that says, “this chatbot will say random things always confirm with a real representative” and then hope it’s enough.

Experts told the Vancouver Sun that Air Canada may have succeeded in avoiding liability in Moffatt’s case if its chatbot had warned customers that the information that the chatbot provided may not be accurate.