- 17 Posts

- 97 Comments

21·2 months ago

I can recommend some stuff I’ve been using myself:

- Dolibarr as an ERP + CRM : requires some work to configure initially. As most (if not all) features are disabled by default, it requires enabling them based on what you need. It also has a marketplace with a bunch of modules you can buy

- Gitea to manage codebases for customer projects. It can also do CI but I’ve not looked into it yet

- Prometheus and its ecosystem (mostly promtail and grafana) for monitoring and alerting

- docker mail server: makes it quite easy to self-host a full mail server. The guides in their docs made it painless for me to configure DMARC/SPF and the other things that make e-mail notoriously hard to host

- Cal.com as a self hostable alternative to calendly

- Authentik for single sign-on and centralized permission management

- plausible for lightweight analytics

- a mix of wireguard, iptables and nginx to basically achieve the same as cloudflare proxying and tunnels

I design, deploy and maintain such infrastructures for my own customers, so feel free to DM me with more details about your business if you need help with this

11·3 months ago

I did not read the link, but two of my biggest concerns do not appear in the summary you provided:

- the burden of hosting an ActivityPub enabled service is often duplicated for each instance instead of being split between them (for example, my Lemmy instance has a large picture folder and database because it is replicating all posts from communities I’m subscribed to)

- it’s a privacy nightmare. All instance admins now have as much spying power as the single centralized service it is replacing

(Edit: typo)

7·3 months ago

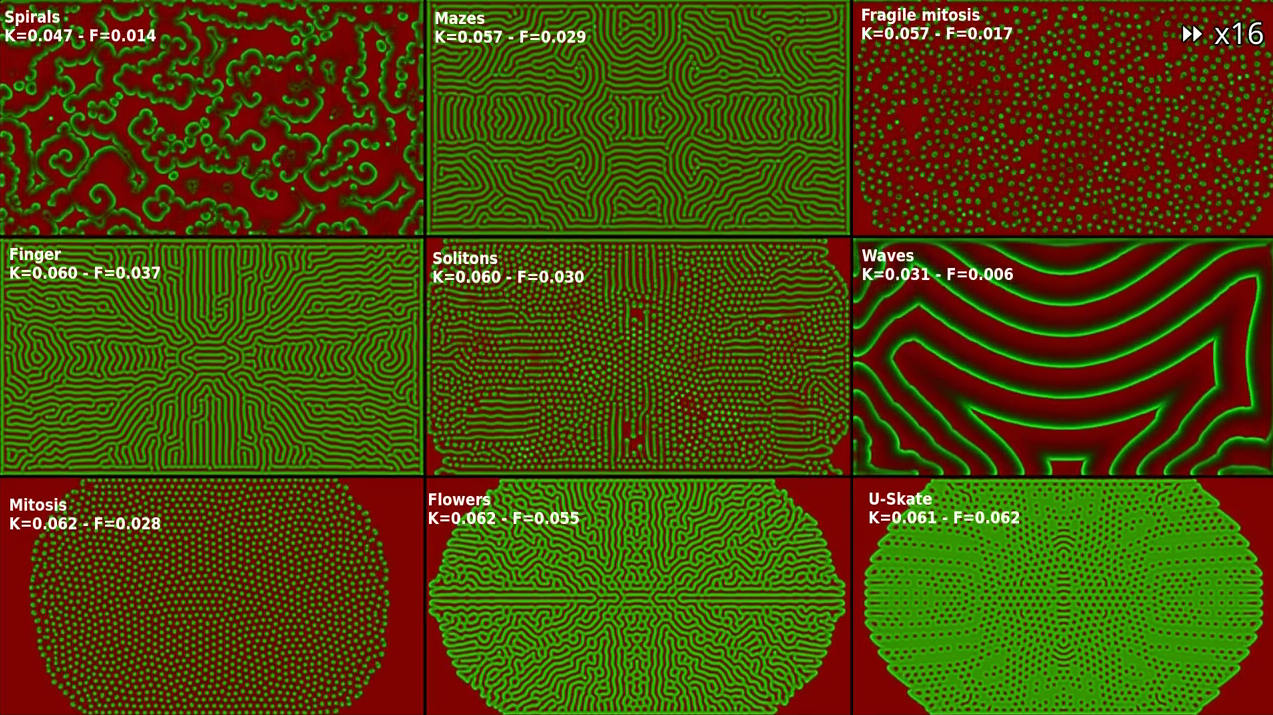

It’s a server that hosts map data for the whole world and sends map fragments (tiles) as pictures for the coordinates and zoom levels that clients request from it
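To make "tiles for coordinates and zoom levels" concrete, here is a minimal sketch of how a client could work out which tile to ask for. It assumes the common XYZ / Web Mercator scheme used by OpenStreetMap-style servers; the server discussed in the thread may use a different scheme, and the URL template below is a placeholder, not a real endpoint.

```python
import math


def tile_for(lat_deg: float, lon_deg: float, zoom: int) -> tuple[int, int]:
    """Return the (x, y) indices of the tile containing a point (XYZ scheme)."""
    n = 2 ** zoom  # number of tiles along each axis at this zoom level
    lat_rad = math.radians(lat_deg)
    x = int((lon_deg + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so that points on the eastern or southern edge stay in range.
    x = min(max(x, 0), n - 1)
    y = min(max(y, 0), n - 1)
    return x, y


def tile_url(template: str, lat_deg: float, lon_deg: float, zoom: int) -> str:
    """Fill a {z}/{x}/{y} style URL template for the tile containing a point."""
    x, y = tile_for(lat_deg, lon_deg, zoom)
    return template.format(z=zoom, x=x, y=y)


# Placeholder template (assumption): substitute the tile server you actually use.
print(tile_url("https://tiles.example.org/{z}/{x}/{y}.png", 48.8566, 2.3522, 12))
```

Each request of this kind tells the tile server roughly where the client is looking on the map.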

5·3 months ago

Are you talking about Nginx Plus? It seems to be a commercial product built on top of Nginx

5·3 months ago

According to the Wikipedia article, “Nginx is free and open-source software, released under the terms of the 2-clause BSD license”

Do you have any source about it going proprietary ?

6·3 months ago

It’s still available in Debian’s default repositories, so it must still be open source (at least the version that’s packaged for Debian)

4·3 months ago

There have been some changes in a few recent releases related to the concerns I raised:

- the default tile provider is now hosted by the Immich team using protomaps (still uses Cloudflare though)

- a new onboarding step providing the option to disable the map feature and clarifying the implications of leaving it enabled has been added

- the documentation has been updated to clarify how to change the map provider, and includes this guide as a community guide

11·3 months ago

I really like the idea about grouped communities with deduplication

29·3 months ago

In my experience, OnlyOffice has the best compatibility with M$ Office. You should try it if you haven’t

11·3 months ago

It’s not that I don’t believe you, I was genuinely interested in knowing more. I don’t understand what’s so “precious” about a random stranger’s thought on the internet if it’s not backed up with any source.

Moreover, I did try searching around for this and could not find any result that seemed to answer my question.

5·3 months ago

Why do you trust NordVPN more than your ISP? Is your ISP known to be especially bad?

81·3 months ago

Can you give examples of countries where mainstream media is not owned by billionaires?

2 years ago was already amazing for someone who tried to play CS 1.6 and trackmania using wine 18 years ago

31·3 months ago

What I did is use a wildcard subdomain and certificate. This way, only `pierre-couy.fr` and `*.pierre-couy.fr` ever show up in the transparency logs. Since I’m using pi-hole with carefully chosen upstream DNS servers, passive DNS replication services do not seem to pick up my subdomains (but even subdomains I share with some relatives who probably use their ISP’s default DNS do not show up).

This obviously only works if all your subdomains go to the same IP. I’ve achieved something similar to cloudflare tunnels using a combination of nginx and wireguard on a cheap VPS (I want to write a tutorial about this when I find some time). One side benefit of this setup is that I usually don’t need to fiddle with my DNS zone to set up a new subdomain: all I need to do is add a new nginx config file with a `server` section.

Some scanners will still try to brute-force subdomains. I simply block any IP that hits my VPS with a `Host` header containing a subdomain I did not configure
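The blocking rule boils down to an allow-list check on the `Host` header. The sketch below shows only that decision logic in Python; it is not the nginx setup described above, and the hostnames are placeholders rather than real subdomains.

```python
# Hypothetical allow-list: the hostnames that actually have a server section.
CONFIGURED_HOSTS = {"example.org", "cloud.example.org", "git.example.org"}


def normalize_host(host_header: str) -> str:
    """Lower-case the Host header and drop an optional port and trailing dot."""
    host = host_header.strip().lower()
    name, sep, port = host.rpartition(":")
    if sep and port.isdigit():
        host = name
    return host.rstrip(".")


def should_block(host_header: str, configured=CONFIGURED_HOSTS) -> bool:
    """True when the request names a host that was never configured."""
    return normalize_host(host_header) not in configured


for header in ("git.example.org", "Cloud.Example.org:443", "admin.example.org"):
    print(header, "->", "block" if should_block(header) else "allow")
```

Because a wildcard DNS record resolves every possible subdomain, a request for an unconfigured name is a strong hint that the client is guessing names rather than following a link.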

892·3 months ago

On this day, exactly 12 years ago (9:30 EDT 1 Aug 2012), was the most expensive software bug ever, in terms of both dollars per second and total lost. The company managed to pare losses through the heroics of Goldman Sachs, and “only” lost $457 million (which led to its dissolution).

Devs were tasked with porting their HFT bot to an upcoming NYSE API service that was announced to go live less than 33 days in the future. So they started a death march sprint of 80 hour weeks. The HFT bot was written in C++. Because they didn’t want to have to recompile even once, the lead architect decided to keep the same exact class and method signature for their PowerPeg::trade() method, which was their automated testing bot that they had been using since 2003. This also meant that they did not have to update the WSDL for the clients that used the bot, either.

They ripped out the old dead code and put in the new code. Code that actually called real logic, instead of the test code, which was designed, by default, to buy the highest offer given to it.

They tested it, they wrote unit tests, everything looked good. So they decided to deploy it at 8 AM EDT, 90 minutes before market open. QA testers tested it in prod, gave the all clear. Everyone was really happy. They’d done it. They’d made the tight deadline and deployed with just 90 minutes to spare…

They immediately went to a sprint standup and then sprint retro meeting. Per their office policy, they left their phones (on mute) at their desks.

During the retro, the markets opened at 9:30 EDT, and the new bot went WILD (!!) It just started buying the highest offer offered for all of the stocks in its buy list. The markets didn’t react very abnormally, because it just looked like they were bullish. But they were buying about $5 million of shares per second… Within 2 minutes, the warning alarms were going off in their internal banking sector… a huge percentage of their $2.5 billion in operating cash was being depleted, and fast!

So many people tried to contact the devs, but they were in a remote office in Hoboken due to the high price of real estate in Manhattan. And their phones were off and no one was at their computer.

The CEO was seen getting people to run through the halls of the building, yelling, and finally the devs noticed. 11 minutes had gone by and the bots had bought over $3 billion of stock. The total cash reserves were depleted. The company was in SERIOUS trouble…

None of the devs could find the source of the bug. The CEO, desperate, asked for solutions. “KILL THE SERVERS!!” one of the devs shouted!!

They got techs @ the datacenter next to the NYSE building to find all 8 servers that ran the bots and DESTROYED them with fireaxes. Just ripping the wires out… And finally, after 37 minutes, the bots stopped trading. Total paper loss: $10.8 billion.

The SEC + NYSE refused to rewind the trades for all but 6 stocks, so the on-paper losses were still at $8 billion. No way they could pay. Goldman Sachs stepped in and offered to buy all the stocks @ a for-profit price of $457 million, which they agreed to. All in all, the company lost close to $500 million and all of its corporate clients left, and it went out of business a few weeks later.

Now what was the cause of the bug? Fat fingering human error during release.

The sysop had declined to implement CI/CD, which was still in its infancy, probably because that was his full-time job and he was making like $300,000 in 2012 dollars ($500k today). There were 8 servers that housed the bot and a few clients on the same servers.

The sysop had correctly typed out and pasted the correct rsync commands to get the new C++ binary onto the servers, except for server 5 of 8. In the 5th instance, he had an extra 5 in the server name. The rsync failed, but because he pasted all of the commands at once, he didn’t notice…

Because the code used the exact same method signature for the trade() method, server 5 was happy to buy up the most expensive offer it was given, because it was running the Sad Path test trading software. If they had changed the method signature, it wouldn’t have run and the bug wouldn’t have happened.
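The failure mode described here (a batch of pasted commands where one silently fails) is what a fail-fast deploy loop is meant to prevent. This is a minimal sketch of that idea, not a reconstruction of the firm's actual process; the server names, the binary path and the `rsync_command` helper are hypothetical.

```python
import subprocess


def rsync_command(server: str) -> list[str]:
    """Hypothetical command: copy the build output to one server."""
    return ["rsync", "-a", "build/bot", f"{server}:/opt/bot/"]


def deploy_all(servers, build_command=rsync_command, run=subprocess.run):
    """Deploy to each server in turn and stop at the first non-zero exit code."""
    deployed = []
    for server in servers:
        result = run(build_command(server))
        if result.returncode != 0:
            raise RuntimeError(
                f"deploy to {server} failed with exit code {result.returncode}; "
                f"already deployed: {deployed}"
            )
        deployed.append(server)
    return deployed
```

With a loop like this, a typo in one server name aborts the release with an error naming that server, instead of leaving one machine on the old binary unnoticed.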

At 9:43 EDT, the devs decided collectively to do a “rollback” to the previous release. This was the worst possible mistake, because they added in the Power Peg dead code to the other 7 servers, causing the problems to grow exponentially. However, it took about 3 minutes for anyone in Finance to actually inform them. At that point, close to $5 million per second was being lost due to the bug.

It wasn’t until 9:58 EDT, when all the servers had been destroyed, that the trading stopped.

Here is a description of the aftermath:

It was not until 9:58 a.m. that Knight engineers identified the root cause and shut down SMARS on all the servers; however, the damage had been done. Knight had executed over 4 million trades in 154 stocks totaling more than 397 million shares; it assumed a net long position in 80 stocks of approximately $3.5 billion as well as a net short position in 74 stocks of approximately $3.15 billion.

28 minutes. $8.65 billion inappropriately purchased. ~1680 seconds. ~$5.15 million/second.

But after the rollback at 9:43, about $4.4 billion was lost. ~900 seconds. ~$4.9 million/second.
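The per-second figures are simply a dollar total divided by the elapsed seconds. A quick way to check them, taking the totals and the 9:30, 9:43 and 9:58 timestamps from the comment as given (none of these inputs are independently verified here):

```python
def rate_per_second(total_dollars: float, minutes: float) -> float:
    """Average dollars per second over a period given in minutes."""
    return total_dollars / (minutes * 60)


# Inputs copied from the comment: 9:30 to 9:58 is 28 minutes, 9:43 to 9:58 is 15.
whole_incident = rate_per_second(8.65e9, 28)
after_rollback = rate_per_second(4.4e9, 15)

print(f"whole incident: {whole_incident / 1e6:.2f} million dollars per second")
print(f"after rollback: {after_rollback / 1e6:.2f} million dollars per second")
```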

That was the story of how a bad software decision and fat-fingered manual production release destroyed the most profitable stock trading firm of the time, and was the most expensive software bug in human history.

2·4 months ago

Thanks for the details! Still curious to know how a new instance, with an old domain and fresh keys, would be handled by other instances.

2·4 months ago

I’m pretty sure they are actually hosting it. The tech is quite different (cofractal uses urls ending with `{z}/{x}/{y}`, while their tile server uses this stuff that works quite differently)

2·4 months ago

There is even a “Ignore cache” box in the devtools network tab

2·4 months ago

Yeah, this probably has to do with the cache. You can try opening dev tools (F12 in most browsers), go to the network tab, and browse to pathfinder.social. You should see all requests going out, including “fake requests” to content that you already have locally cached

Thank you for the link. I’ve seen it posted a few days ago.

The caching proxy for this tutorial should easily work with any tile server, including self-hosted. However, I’m not sure what the benefits would be if you are already self-hosting a tile server.
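For readers unfamiliar with the idea, a caching proxy for tiles amounts to "serve from local storage if present, otherwise fetch upstream once and keep a copy". The sketch below illustrates that logic only; it is not the setup from the tutorial, and `fetch_upstream` is a hypothetical callable standing in for whatever HTTP client talks to the real tile server.

```python
from pathlib import Path
from typing import Callable


def cached_tile(
    cache_dir: Path,
    z: int,
    x: int,
    y: int,
    fetch_upstream: Callable[[int, int, int], bytes],
) -> bytes:
    """Return tile bytes from the local cache, fetching upstream only on a miss."""
    path = cache_dir / str(z) / str(x) / f"{y}.tile"
    if path.exists():
        return path.read_bytes()  # cache hit: upstream never sees this request
    data = fetch_upstream(z, x, y)  # cache miss: one upstream request
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_bytes(data)
    return data
```

The upstream provider only sees the first request for each tile, and it sees the proxy's address rather than the client's, which is the main privacy benefit of such a setup. A real proxy would also need cache expiry, which is left out here.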

Lastly, the self-hosting documentation for OpenFreeMap mentions a requirement of 300GB of storage and 4GB of RAM just for serving the tiles, which is still more than I can spare