Cops should only be allowed to drive cybertrucks.

So, if you wanna do something like that, I’d say target individual workers at the company you’re trying to get to abandon YouTube. If you just send a letter or something, you’re not gonna get anywhere. But so many social media networks allow targeted advertising that you could probably target everybody who, for example, works at PayPal, lives near their home office, and liked Kamala Harris’s Instagram. That would be the way to go.

Anything that’s gonna mess with the algo is gonna require a coordinated effort of a shitload of people. Which is what we should also be doing on places like Reddit and other social media.

Pretty sure that’s his short wife.

“Some people want pie in the sky, I just want practical solutions.”

Are these plane Boeing workers or munition Boeing workers?

It’s good for your prostate.

Looking past all the red scare/AI bullshit, it’s probably a nothingburger. Researchers funded by the Chinese equivalent of DARPA doing something they thought would be cool.

I thought the pixy stick was a preroll tube, at first.

As pointed out a couple of days ago, the chatbot didn’t drive him to anything and even discouraged his talk of offing himself.

I don’t know that you can make that point in a way they’ll register.

Like, no matter how much of a difference in sacrifice you try to portray, they’ll unironically be like

They are shameless, and so, so ignorant.

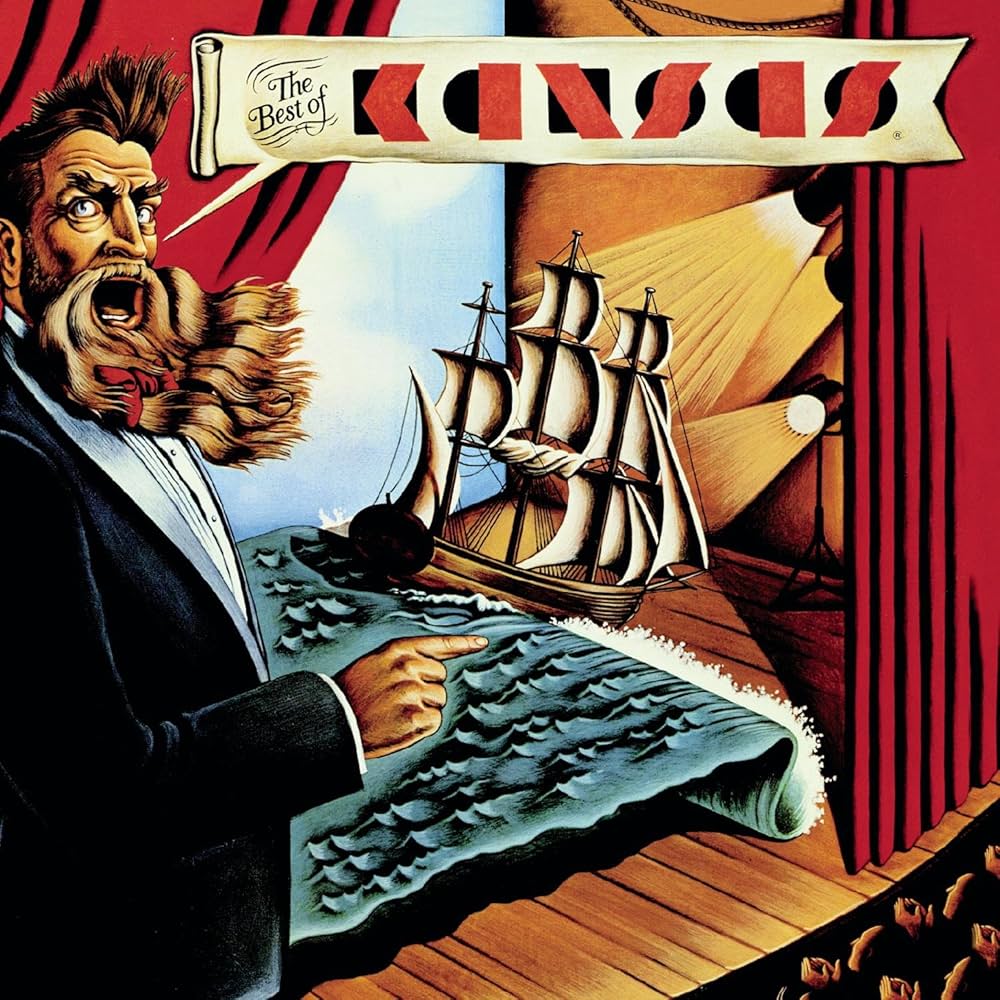

lmao. Another banger, Frank.

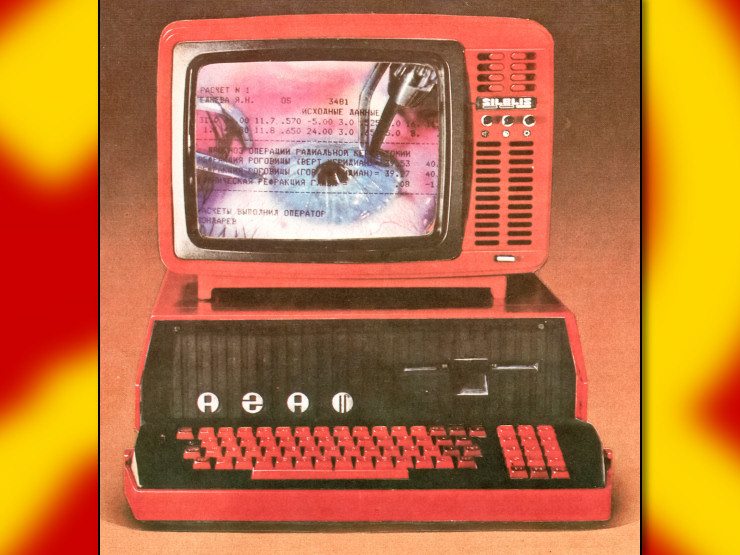

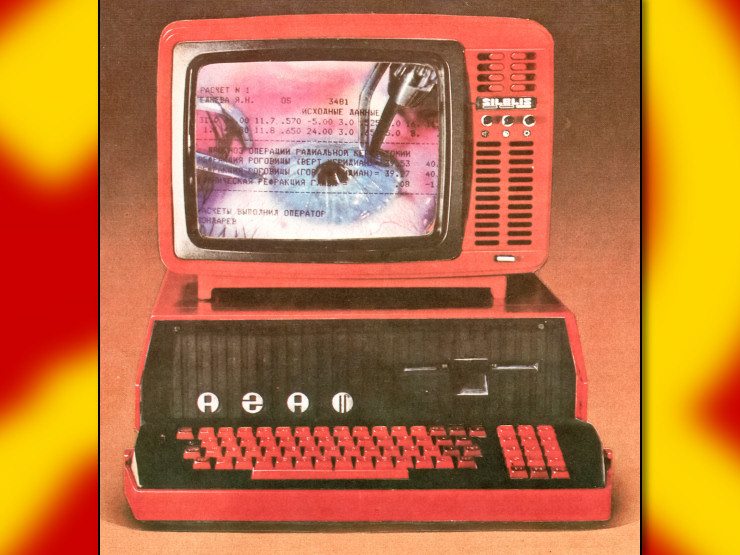

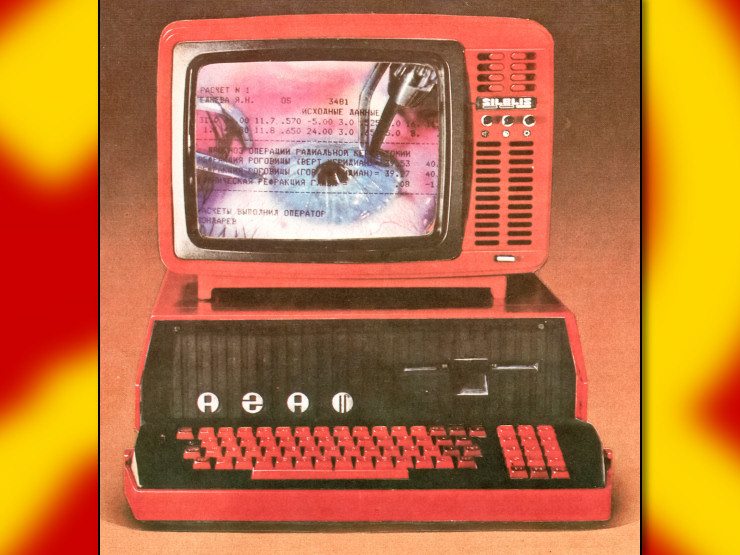

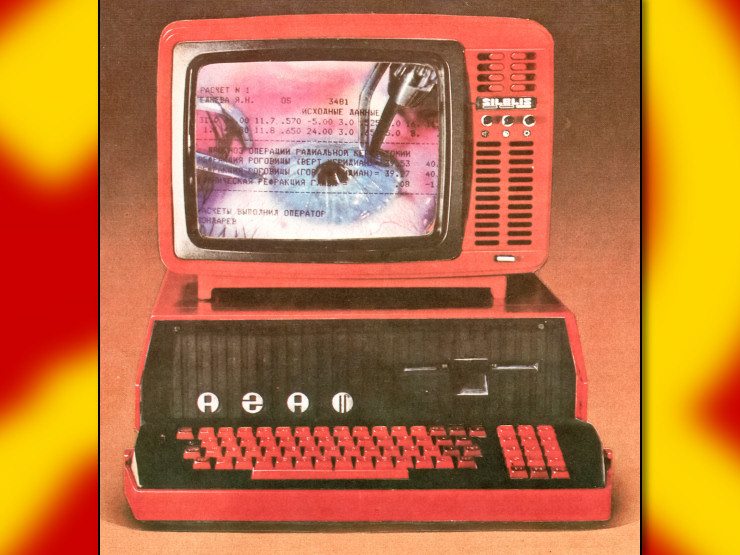

2 million in equipment is like 700 high-end gaming rigs. The future is always gonna come down to organizing our resources so we can face Goliaths together.

Was there anything cool in the code they released that they weren’t supposed to?

Firefox-dev lets you run custom extensions. They just updated the GUI tho. It’s all customizable now.

Agreed, but if we make them all drive cybertrucks the problem will sort itself out.