Thousands of authors demand payment from AI companies for use of copyrighted works::Thousands of published authors are requesting payment from tech companies for the use of their copyrighted works in training artificial intelligence tools, marking the latest intellectual property critique to target AI development.

While I am rooting for authors to make sure they get what they deserve, I feel like there is a bit of a parallel to textbooks here. As an engineer, if I learn about statics from a textbook and then go use that knowledge to help design a bridge that I and my company profit from, the textbook company can’t sue. If my textbook has a detailed example for how to build a new bridge across the Tacoma Narrows, and I use all of the same design parameters for a real Tacoma Narrows bridge, then they may have much more of a case.

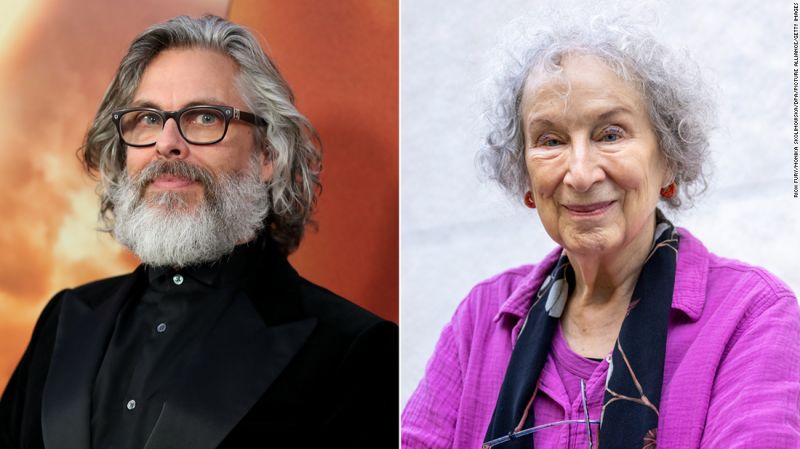

I think that these are fiction writers. The maths you’d use to design that bridge is fact, and the book company merely decided how to display facts. They do not own that information, whereas The Handmaid’s Tale was the creation of Margaret Atwood and was an original work.

It’s not really a parallel.

Textbooks don’t have copyrights on the concepts and formulae they teach. They only have copyrights on the actual text.

If you memorize the textbook and write it down 1:1 (or close to it) and then sell the text you wrote down, then you are still in violation of the copyright.

And that’s what the likes of ChatGPT are doing here. For example, ask it to output the lyrics for a song and it will spit out the whole (copyrighted) lyrics 1:1 (or very close to it). Same with pages of books.

The memorization is closer to that of a fanatical fan of the author. It usually knows the beginning of the book and the better-known passages, but not entire longer works.

By now, ChatGPT tries to refuse to output copyrighted material even where it could, and though it can be tricked, they appear to have implemented a hard filter for some better-known passages, which stops generation a few words in.

Have you tried just telling it to “continue”?

Somewhere in the comments to this post I posted screenshots of me trying to get the lyrics for “We Will Rock You” from ChatGPT. It first just spat out “Verse 1: Buddy,” and ended there. So I answered with “continue”, it spat out the next line, and after the second “continue” it gave me the rest of the lyrics.

Similar story with e.g. the first chapter of Harry Potter 1 and other stuff I tried. The output is often not perfect, with a few words being wrong, but it’s very clearly a “derived work” of the original. In the view of copyright law, changing a few words here is not a valid way of getting around copyrights.

But you paid for the textbook

Libraries exist

An AI analyzes the words of a query and generates its response(s) based on word-use probabilities derived from a large corpus of copyrighted texts. This makes its output derivative of those texts in a way that someone applying knowledge learned from the texts is not.
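To make “word-use probabilities” a bit more concrete, here is a toy sketch in Python. It is only an illustration under loud assumptions: real LLMs like ChatGPT use neural networks over tokens, not simple word-pair counts, and the function names (`train_bigrams`, `next_word_probs`, `generate`) and the tiny corpus are made up for this example. It just shows the general idea of deriving next-word probabilities from a corpus and sampling output from them.

```python
import random
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for each word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    words = corpus.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, word):
    """Turn the raw follow-counts for `word` into probabilities."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}

def generate(counts, start, length, seed=0):
    """Sample up to `length` words, each chosen from the previous word's followers."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # the last word never had a follower in the corpus
        words, weights = zip(*followers.items())
        out.append(rng.choices(words, weights=weights)[0])
    return " ".join(out)

# Hypothetical miniature "corpus"; a real one would be billions of words.
corpus = "the cat sat on the mat and the cat slept"
counts = train_bigrams(corpus)
probs = next_word_probs(counts, "the")  # "cat" follows "the" twice, "mat" once
sample = generate(counts, "the", 5)
```

In this toy version every generated word pair already appears somewhere in the corpus, which is one way to picture why people call the output “derived” from the training text. Whether that picture carries over to a large neural model is exactly what is being argued about here.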

Why, though?

Is it because we can’t explain the causal relationships between the words in the text and the human’s output or actions?

If a very good neuroscientist traced out the engineer’s brain and could prove that, actually, if it wasn’t for the comma on page 73 they wouldn’t have used exactly this kind of bolt in the bridge, now is the human’s output derivative of the text?

Any rule we make here should treat people who are animals and people who are computers the same.

And even regardless of that principle, surely a set of AI weights is either not copyrightable or else a sufficiently transformative use of almost anything that could go into it? If it decides to regurgitate what it read, that output could be infringing, same as for a human. But a mere but-for causal connection between one work and another can’t make text that would be non-infringing if written by a human suddenly infringing because it was generated automatically.

Because word-use probabilities in a text are not the same thing as the information expressed by the text.

W-what?

I think what he meant was that we should treat an AI the same way we treat people: if a person making a derivative work can get a copyright strike, then so should an AI making a derivative work. The same rule should apply to all creators, regardless of whether they are an AI or not.

In the future, some people might not be human. Or some people might be mostly human, but use computers to do things like fill in for pieces of their brain that got damaged.

Some people can’t recognize faces, for example, but computers are great at that now, and Apple has that thing that is Google Glass but better. But a law against doing facial recognition with a computer, allowing it to be done only with a brain, would prevent that solution from working.

And currently there are a lot of people running around trying to legislate exactly how people’s human bodies are allowed to work inside, over those people’s objections.

I think we should write laws on the principle that anybody could be a human, or a robot, or a river, or a sentient collection of bees in a trench coat, and that this is 100% their own business.

But the subject under discussion is large language models that exist today.

I’m sorry, but that’s ridiculous.

I have indeed made a list of ridiculous and heretofore unobserved things somebody could be. I’m trying to gesture at a principle here.

If you can’t make your own hormones, store bought should be fine. If you are bad at writing, you should be allowed to use a computer to make you good at writing now. If you don’t have legs, you should get to roll, and people should stop expecting you to have legs. None of these differences between people, or in the ways that people choose to do things, should really be important.

Is there a word for that idea? Is it just what happens to your brain when you try to read the Office of Consensus Maintenance Analog Simulation System?

The issue under discussion is whether or not LLM companies should pay royalties on the training data, not the personhood of hypothetical future AGIs.

Why should they pay royalties for letting a robot read something that they wouldn’t owe if a person read it?

You have a point, but there’s a pretty big difference between something like a statistics textbook and the novel “Dune”, for instance. One was specifically written to teach mostly pre-existing ideas and the other was created as entertainment to sell to as wide an audience as possible.

Plagiarism filters frequently trigger on ChatGPT-written books and articles.