The smartest thing Apple has done in the past decade is buy TSMC a factory. They just gave TSMC a factory for free, with the deal being they have guaranteed time every year, no fighting.

That’s what let them make their own laptop CPUs: time. The M CPUs aren’t good because of ARM, or Apple geniuses, but rather TSMC bleeding-edge tech and high yields. And of course, every company that didn’t buy TSMC a factory has to fight for time, meaning everyone else loses out.

The costs of being a bleeding-edge chip fab make reproducing TSMC elsewhere unattainable.

Agree on Apple’s deal, but they play a huge role in designing the chips, like all their components. (E.g. Apple-designed Samsung screens have a reject rate of about 50%, and it was higher when the X came out.) And their Rosetta translation layer is incredibly efficient. Took Microsoft years to develop a worse version.

I learned Rosetta is efficient because it’s backed by hardware on the M1. I saw that if you use Rosetta on Linux for example the Qualcomm emulator competes.

Can you share more about the hardware support? What I heard from Marcan, who was driving the effort to port Linux to the M1, is that the instruction set is the same as non-Apple ARM. Is it memory architecture? Register set? Coprocessor acceleration?

Here is a great explanation on the matter.

:( it’s only available after sign up

The software aspect I won’t argue with. But I will go against the chip design. In 2024, most parts of most chips are built from library prefabs, and outside of that, all the efficiencies come from taking advantage of what the chip fab is offering.

That’s why these made-up nm numbers are so important. They are effectively marketing and don’t have much basis in reality (EUV wavelength is ~13nm, but we’re claiming 6 now). What they do indicate is improvements in other aspects of lithography.

Apple aren’t the geniuses here, which is why their M chips were bested by Intel’s EUV chips as soon as Intel upgraded its fabs to be more advanced than TSMC’s for six months. It’s all about whose fab is running the bleeding edge.

> bested by intels euv chips

source? last i heard intel received an euv tool from asml but certainly haven’t produced anything with it - that’s slated for next year earliest, and until it hits mass production all numbers are just marketing

intel and apple aren’t aiming for the same things - apple’s chip designs aren’t generic. they target building an apple device… which means that it will run an apple device incredibly efficiently - gpu vs gpu, m series chips are fine; cpu vs cpu, they’re among the top of the range; and at everything they do they’re incredibly efficient (because apple devices are about small, cool, battery-saving)… and they certainly don’t optimise for cost

what they do better than anyone else is produce an ultralight device made for running macos, or a phone made for running ios - helped by the coprocessors etc they put onto their SoCs to offload work from their generalised processors

you wouldn’t say that honeywell is “bested” by intel because intel cpus are faster… that’s not the goal of things like radiation hardened cpus

Intel is really good at making 300+ watt monster CPUs. Intel really fucking sucks at making a good laptop CPU. Apple is really good at making an incredible laptop CPU, but sucks at making a Mac Pro CPU.

Process node differences definitely play a part, but it’s almost like comparing apples to oranges.

> Intel really fucking sucks at making a good laptop CPU

Which is funny, because it was the power efficiency of the P6 (Pentium III/Pentium Pro) core versus the Netburst Pentium 4 that resulted in Intel dropping Netburst and basing the Core series off of an evolution of the P6, and the only reason they kept the P6 around was that Netburst was a nightmare in laptops.

ARM is good though. Low power consumption. I’m glad somebody made it more mainstream. I have a 2010 ARM board NAS that streams video (1080p) and music over DLNA and hosts SMB shares, with a web GUI, all on 256MB of RAM.

Yo that’s crazy! I was surprised to find out the Pi 4 I picked up a few years ago can’t even stream 1080p video, and it has 4GB of RAM and a 1.8GHz CPU

That’s weird. I have a dual-core 1.3GHz Atom with 1800MB of RAM that can stream 1080p. Are you transcoding too?

Dunno, was just trying to watch YouTube on Chromium. I tried to install Firefox to see if that helped but it did not.

I looked it up on the pi forums and from what I could tell it’s just not capable of 1080p60.

Ah, I misunderstood. I thought you meant streaming a 1080p video to play on another device, not view one on the Pi.

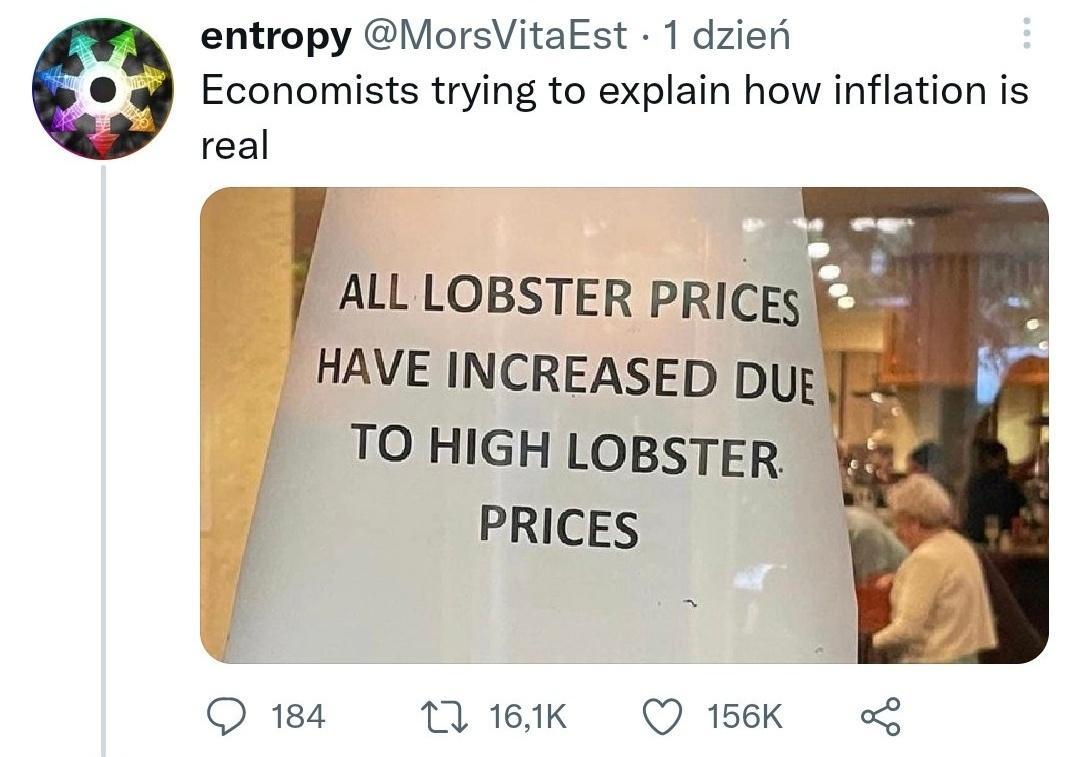

Ah why not. Everything is already very affordable might as well raise some prices. Now do my income.

We should all just stop buying shit. Maybe prices will drop again lol.

Wishful thinking…

Honestly, this is probably a better solution than you might have guessed. Especially when it comes to fake inflation price hikes.

Companies have this way of shit-testing the economy to see what you’re willing to pay. If there isn’t a significant reduction in turnover rates, then say hello to the new prices!

Prime example being NVIDIA with their bogus GPU pricing. Turns out that their shit still sells at $2000 a GPU, and people seem way too quick to accept this as the new reality.

If we all agreed that $2000 GPUs, $3000 laptops and $1500 phones are bullshit, those price points wouldn’t exist. Unfortunately we live in a world where normies are more interested in fancy features and the general public is incapable of estimating specs based on their needs. Which leaves all of us being played for absolute fools by companies manipulating the supply chain.

I’m with you on GPU prices. The old style of a high end GPU was like 600CAD. That’s what I paid for my 2000 series card. I’ll rock this until things become more sane. I ain’t payin 1k or more for a GPU. Ridiculous. You could get a console with probably the same GPU for that price.

Having dual-purposed my 3080 for both work (product marketing renders) and gaming, the cost was actually manageable and I’ve since earned back the costs. But it is what we’ve come to. Enthusiast hardware is only really feasible if you have a business case for it. If it doesn’t pay for itself, it doesn’t make sense.

Part of what got us into this mess is that GPUs started to become their own business case due to crypto mining. Which added a bunch of RoI value to the cards, which was ultimately reflected in their pricing. Now that consumer mining is pretty much unfeasible, we’re still seeing ridiculous pricing, and the only ways to make money using a GPU require a skillset or twisted morals (scalping).

If I were to buy something for gaming only, $1k definitely does not make any sense at all. And if that part requires at least $800 in other parts to make a full system it’s even less reasonable. Consoles are the other extreme though, and are usually sold at a loss to get you spending money on the platform instead.

On behalf of all of you in this community, fuck the status quo!

Ya I dunno. It used to be you could build a decent gaming PC for $1500. So 1k + 500 in other stuff isn’t super horrible. But now a decent motherboard is $400. Same for CPU and everything else. Cha-ching

Just raise everything at this point because why not

fuck apple, just for good measure